BIG DIVE 7

from June 18th to July 13th 2018 | Turin, Italy

A complete data science program

BIG DIVE 7 covered:

Real cases that prepare you to work with data

The greatest value of the program is that you learn through hands-on real cases: you get insights from data-driven companies and research institutions into how they managed, analyzed and drew inferences from their own data, and you can apply and experiment with their methodologies while asking questions directly to their data scientists, researchers and developers.

This is a unique opportunity that you can’t get anywhere else!

Below is a preview of the program:

Teacher: Renato Gabriele — Oohmm

A journey through years of advances in big data analysis related to computational propaganda, crises, disasters, social events and general elections: the search for new models, and analyses of data and metadata that may be “polluted” by multiple kinds of interests and sources.

Renato Gabriele will talk about a 5-year research project on social and web metadata, and about the innovation needed to connect data analysis with digital humanities in order to better understand complex big data.

We’ll end with an open discussion on the matrix of common mistakes in approaching critical data analysis and their unexpected effects.

Duration: Talk.

#bigdata #propaganda #research #digitalhumanities

Teacher: Fabio Franchino — TODO

Immersive lecture on the key elements and concepts behind data visualization.

The workshop is an immersive tutorial on using D3.js, the open source JavaScript library, to represent data and create customized, animated diagrams and charts.

Duration: 1.5 days.

Prerequisites: HTML, CSS, previous experience with JavaScript is welcome.

#dataviz #datavisualization #d3js #javascript

Teacher: Stefania Delprete — TOP-IX

Interactive lessons, using Jupyter Notebooks, on Python and its most widely used data science libraries: NumPy, Pandas and Matplotlib, plus a first look at Scikit-learn. You’ll also get a clear picture of what’s inside the Anaconda and SciPy ecosystems.

This session will include insights into the history and future of these open source libraries, and into how to contribute to them and take part in community events.

Stickers for all participants, provided by the Python Software Foundation and NumFOCUS.

Duration: 1.5 days.

Prerequisites: Exposure to Python and Jupyter Notebooks.

#datascience #python #numpy #pandas #matplotlib #scipy #scikitlearn

Teacher: Alessandro Molina — AXANT

Companies and software products generate more and more data; in the IoT world especially, records are saved at a throughput of thousands per second.

This requires solutions that can scale writes and perform real-time cleanup and analysis of thousands of records per second. MongoDB is widely used in these environments as what is commonly called a “speed layer”, performing fast analytics over the most recent data and adapting or cleaning up incoming records.

This session aims to show how MongoDB can be used as the primary storage for your data, scaling to thousands of records and thousands of writes per second, while also acting as a real-time analysis and visualization channel (thanks to change streams) and as a flexible analytics tool (thanks to the aggregation pipeline and MapReduce).

Duration: 1 day.

Prerequisites: Python, JavaScript.

#mongodb #realtime #scaling #mapreduce

Teacher: Nicolò Bidotti — AgileLab

Big Data analysis is a hot trend, and one of its major roles is to give new value to enterprise data. However, data and information lose value as they age, so in many contexts it is important to analyze incoming data flows in near real time. Apache Spark is a major player in the big data landscape, and its Streaming module aims to solve the main challenges of real-time data processing at scale in distributed environments.

This session aims to show the potential of streaming data analysis and how to leverage Apache Spark Structured Streaming to extract value from it, without having to handle the common problems of stream processing at scale that Apache Spark already solves.

Duration: 2 days.

Prerequisites: Python.

#bigdata #dataengineering #dataframework #apachespark

Teachers: Sarah Wolf, Andreas Geiges — Global Climate Forum

To understand possible transitions of complex systems (e.g. societies, markets, systems of socio-technical co-evolution), pure data analysis might not be sufficient, because such transitions often imply substantial shifts that can hardly be described by statistical extrapolation of data alone. Modelling can therefore be a useful complement to data analysis.

This workshop introduces an agent-based model, built on synthetic populations, that addresses the global challenge of making mobility more sustainable. It illustrates the methodological approach of agent-based modelling, discusses how model development can be accompanied by stakeholder dialogues, explores the interaction between such a model and the relevant data science tools, and provides some hands-on exercises.
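To give a flavour of the agent-based approach, here is a minimal toy sketch in Python. It is not the Global Climate Forum model: the synthetic population, the two transport modes (car or bike), the `peer_weight` parameter and the adoption rule are all hypothetical assumptions chosen for illustration. Each agent has a personal inclination to cycle and is influenced by the share of cyclists among a few random contacts; the aggregate cycling share emerges from repeated individual decisions rather than from extrapolating past data.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Agent:
    """One member of a hypothetical synthetic population."""
    preference: float          # personal inclination to cycle, in [0, 1]
    cycles: bool = False       # current transport mode: True = bike, False = car
    contacts: list = field(default_factory=list)  # indices of social contacts


def build_population(n_agents, n_contacts, seed=0):
    """Create agents with random preferences and random social contacts."""
    rng = random.Random(seed)
    agents = [Agent(preference=rng.random()) for _ in range(n_agents)]
    for i, agent in enumerate(agents):
        others = [j for j in range(n_agents) if j != i]
        agent.contacts = rng.sample(others, n_contacts)
        agent.cycles = agent.preference > 0.8  # a minority of early adopters
    return agents, rng


def step(agents, rng, peer_weight=0.5):
    """Each agent reconsiders its mode based on its preference and its peers."""
    # Synchronous update: every decision uses the previous step's state.
    previous = [a.cycles for a in agents]
    for agent in agents:
        peer_share = sum(previous[j] for j in agent.contacts) / len(agent.contacts)
        # Hypothetical rule: a weighted mix of personal preference and social influence.
        p_cycle = (1 - peer_weight) * agent.preference + peer_weight * peer_share
        agent.cycles = rng.random() < p_cycle


def cycling_share(agents):
    """Fraction of agents currently cycling."""
    return sum(a.cycles for a in agents) / len(agents)


if __name__ == "__main__":
    agents, rng = build_population(n_agents=500, n_contacts=5)
    print(f"step 0: {cycling_share(agents):.2f}")
    for t in range(1, 11):
        step(agents, rng)
        print(f"step {t}: {cycling_share(agents):.2f}")
```

Changing `peer_weight` is a simple way to explore how strongly social influence shapes the outcome, which is the kind of scenario question an agent-based model is meant to support.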

Duration: 2 days.

Prerequisites: Basic knowledge of Python.

#datascience #complexsystems #agentbased #mobility #sustainability

Teachers: Salvatore Iaconesi, Oriana Persico — Human Ecosystems Relazioni

We constantly generate data, whether we realize it or not, whether we want it or not, and a very limited number of subjects have access to all of it. This is a very serious situation, with enormous implications for our fundamental rights and freedoms, and for our opportunities to prosper, create, express ourselves, relate to others and live a just, inclusive, constructive life.

In this session we explore technologies for cultural acceleration through data: Human Ecosystems, to create large-scale, participatory data collection processes; Ubiquitous Commons, for distributed, blockchain-supported data rights and evolved data-ownership patterns; and Generative Open Data, as an accessibility layer for shared data commons.

This is a hands-on session in which profound theoretical concepts emerge from the technological architectures themselves and from the ways we use them. It focuses mainly on Network Science and how we can use it to better understand the city’s Relational Ecosystem of people, organizations, network-connected objects, sensors and more.
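As a small taste of the network science angle, the sketch below represents a hypothetical relational ecosystem (people, an organization, a sensor) as an undirected graph built from plain Python dictionaries and computes degree centrality, one of the simplest measures of how connected each node is. The node names and relations are invented for illustration; the Human Ecosystems platforms and their APIs are not shown here.

```python
from collections import defaultdict


def build_graph(edges):
    """Build an undirected adjacency map from (node, node) pairs."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def degree_centrality(graph):
    """Degree of each node divided by the maximum possible degree (n - 1)."""
    n = len(graph)
    return {node: len(neighbours) / (n - 1) for node, neighbours in graph.items()}


# Hypothetical relations between people, an organization and a sensor.
edges = [
    ("alice", "bob"),
    ("alice", "library"),
    ("bob", "library"),
    ("carol", "library"),
    ("carol", "air_sensor_12"),
]

graph = build_graph(edges)
for node, score in sorted(degree_centrality(graph).items(), key=lambda kv: -kv[1]):
    print(f"{node:>14}: {score:.2f}")
```

Here the library is the most central node (3 of 4 possible connections). For real data, the NetworkX library offers the same measure as `networkx.degree_centrality`, along with many others.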

We will learn how to use the platforms and explore a practical case study: Bologna’s TDays, the limited-traffic weekends in Bologna’s historical center. Together we will work out possible ways to transform them into a data-driven, inclusive, engaging opportunity for participatory citizenship, using the platforms, social networks, art and design.

Duration: 1 day.

Prerequisites: Some familiarity with databases (browsing and exporting data from them), Python and/or JavaScript.

#networkscience #socialscience #territory #city #citizenship

Teacher: Simone Marzola — Oval Money

In the era of multi-tiered big data infrastructures, data is commonly spread across multiple data sources and duplicates are everywhere. As a data scientist you’ll need to consolidate data, through a process called data deduplication, to improve data quality and build comprehensive data assets.

This session aims to show how Python data analysis tools such as Pandas and NumPy can be used to solve the deduplication problem in very large datasets. The proposed method includes data preprocessing and cleaning, comparison, indexing and classification.
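The steps named above can be illustrated with a toy standard-library sketch. It is not Oval Money’s pipeline: the record fields, the blocking key (first three letters of the cleaned merchant name plus the amount), the `difflib` similarity measure and the 0.8 threshold are all assumptions. Preprocessing normalizes the text, indexing (here, blocking) restricts comparisons to records that share a key, comparison scores each candidate pair, and classification labels pairs above the threshold as duplicates.

```python
import re
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations


def preprocess(text):
    """Cleaning: lowercase, replace punctuation and digits with spaces, collapse spaces."""
    text = re.sub(r"[^a-z ]", " ", text.lower())
    return " ".join(text.split())


def block_key(record):
    """Indexing (blocking): only records sharing this key are compared."""
    return (record["merchant"][:3], record["amount"])


def similarity(a, b):
    """Comparison: string similarity ratio in [0, 1]."""
    return SequenceMatcher(None, a, b).ratio()


def find_duplicates(records, threshold=0.8):
    """Return index pairs classified as duplicates."""
    cleaned = [dict(r, merchant=preprocess(r["merchant"])) for r in records]

    blocks = defaultdict(list)
    for i, rec in enumerate(cleaned):
        blocks[block_key(rec)].append(i)

    duplicates = []
    for indices in blocks.values():
        for i, j in combinations(indices, 2):
            score = similarity(cleaned[i]["merchant"], cleaned[j]["merchant"])
            # Classification: a simple threshold rule.
            if score >= threshold:
                duplicates.append((i, j))
    return duplicates


# Made-up transactions for illustration only.
transactions = [
    {"merchant": "AMAZON EU SARL*123", "amount": 19.99},
    {"merchant": "Amazon EU S.a.r.l.", "amount": 19.99},
    {"merchant": "Netflix.com", "amount": 9.99},
    {"merchant": "AMAZON EU SARL*123", "amount": 5.00},
]
print(find_duplicates(transactions))  # [(0, 1)]
```

Blocking is what keeps the approach tractable on large datasets, since it avoids comparing every pair of records; at scale the same steps are typically expressed with vectorized Pandas/NumPy operations rather than Python loops.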

We will use an anonymized subset of Oval Money user transactions to match duplicates and detect recurring transactions.

Duration: Half day.

Prerequisites: Python, Pandas, NumPy.

#bigdata #deduplication #classification #finance

Teachers: Isabella Iennaco, Paolo Raineri — KNOWAGE (Engineering S.p.A)

Business analytics lecture on a real KNOWAGE use case of predictive maintenance with an Open Source full stack!

The lecture is a journey through KNOWAGE’s data visualization and data discovery capabilities and how they work in practice. The teachers will guide you towards a comprehensive understanding of the KNOWAGE suite, allowing you to explore a large Industry 4.0 business project.

Duration: Half day.

Prerequisites: Python, JavaScript.

#mongodb #realtime #scaling #mapreduce #predictivemaintenance

Teachers: André Panisson, Alan Perotti — ISI Foundation

This in-depth part of the course lets you build an appealing and diversified Machine Learning portfolio. It starts with an introduction to Machine Learning and its application with Scikit-learn, and continues with lectures on Neural Networks and backpropagation, where you’ll start exploring Computer Vision techniques on an image dataset.

Next come Deep Learning methods: you’ll be challenged to use TensorFlow and Keras on real image classification cases (such as distracted drivers, healthcare or plant diseases). The workshop ends with lessons on Transfer Learning and a final project in which you build your own dataset by scraping Google Images and practice everything you learned.

Duration: 3.5 days.

Prerequisites: Python, Pandas, Statistics, exposure to Machine Learning is welcome.

#machinelearning #deeplearning #neuralnetworks #scikitlearn #tensorflow

Teacher: Alexandre Lissy — Mozilla

DeepSpeech is an open source Speech-To-Text engine that uses a model trained with machine learning techniques, based on Baidu’s Deep Speech research paper.

You will learn how the model works and how it was implemented using TensorFlow. The workshop will cover how we went from a proof-of-concept hack to a model we are trying to make usable in production, and how we leverage the distributed training system. We’ll explore how the inference-specific model is built, the surrounding code that makes it run on several devices, and the TensorFlow tooling we explored to try to speed things up.

We also present the Common Voice project, which aims to collect an open dataset for machine learning, and more specifically for voice-targeted machine learning.

You’ll be able to contribute to both projects: you’ll learn how to train your own DeepSpeech model, how to use DeepSpeech as a “black box”, how to hack on DeepSpeech, and how to contribute to Common Voice.

Duration: Half day.

Prerequisites: Python, shell, exposure to C++ is welcome.

#machinelearning #deepspeech #voicerecognition #tensorflow

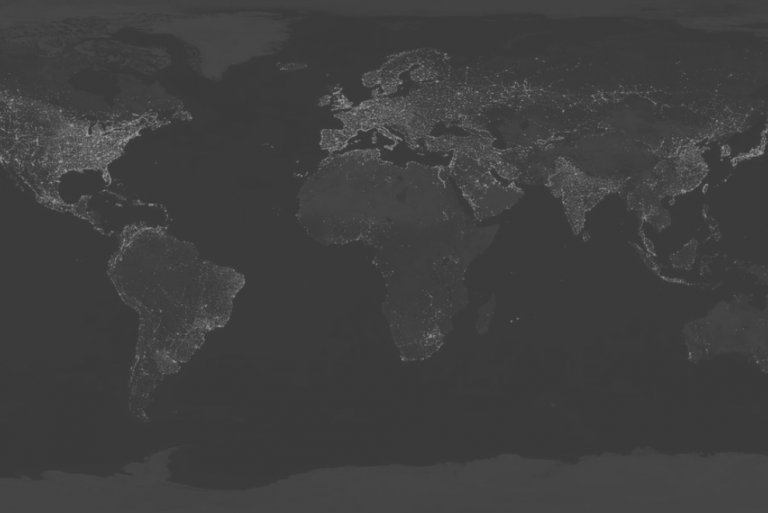

Teacher: Maurizio Napolitano — Fondazione Bruno Kessler

The workshop starts with an introduction to the world of GIS, geospatial protocols and available geodata resources.

It then dives into the OpenStreetMap ecosystem, exploring how it can be a great tool for data scientists. After examples of analysis on real cases, you’ll be challenged to build your own geospatial project under the supervision of Maurizio Napolitano.

Duration: 1 day.

Prerequisites: Python, previous experience with OpenStreetMap is welcome.

#geospatial #map #opendata #osm

During the four weeks of BIG DIVE 7, lessons and activities took place Monday to Friday, from 9:30am to 4:30pm. Additional time was reserved for special lectures, exercises and “homework”.

The last week of the course was dedicated to a final project.

Application process

Here’s a timeline of what happened:

| March 5th | BIG DIVE 7 – Registration opens |
| April 8th | Early-bird price expires |
| June 3rd | BIG DIVE 7 – Registration closes |
| June 18th to July 13th | BIG DIVE 7 |
| July 13th | BIG DIVE 7 closing event |

The application process started with a self-evaluation of the prerequisites (mostly related to programming skills and Maths background) needed to access and fully enjoy the course. The course is taught in English, which is why fluent English is among the prerequisites. Optional skills were taken into consideration to create a balanced class.

In the form you can tell us more about yourself, your previous experience and why we should choose you. We strongly encourage you to make a short video to stand out among the other candidates!

Once you press ‘send’, the waiting begins. Two weeks after registration opened, our team started screening the applications received. Candidates might be contacted by the organizers and asked to provide more information about their skills or to attend an interview (in person or via a remote audio-video communication tool).

The selection process continued until the official registration closing date, progressively building a class of at most 20 Divers.

Applicants selected before the official end of registration were asked to pay a deposit of 20% of the total fee due for their profile. If the deposit is not paid within one week of the request, the candidate loses priority in the selection queue.

If a selected candidate withdraws, a new Diver is selected.

The deposit is not refunded if you withdraw after registration has closed.

All news about selection, exclusion and deposit requests is communicated by email, to the address provided in the application form.

We understand that you need to organise your work schedule and summer activities in advance, so we do our best to give you a final answer as soon as possible.

Pricing

| The price includes | The price does not include |
The price depends on which category you fall into:

| | Student | Non-profit | Regular |
| Eligibility | With proof of full-time student status at the time of application (PhD students are also eligible for this profile) | With proof of full-time / part-time employment at a non-profit organization at the time of application | If you are not eligible for the Student or Non-profit profiles |
| Early Price (till April 8th) | | | |
| Full Price (till June 3rd) | € 950 | | |

Until April 8th, applicants could access the early-bird price.

A pleasure not only for the mind…

The venue for the course is a recently renovated space in a beautiful historical building in the center of Turin close to the river.

Turin is one hour from the Alps and two hours from the seaside.

By train you can easily travel to Milan, Florence, Rome and Venice to celebrate with your classmates after (or during) BIG DIVE 7!

Guarantee

We do not refund unused portions of the training; however, refunds are handled on a case-by-case basis.

A copy of your university ID card or any certificate proving you are a student at the time of application. You can send it to info.bigdive@top-ix.org after filling in the online form.

The must-have requirements for admission are:

[1] Background in Descriptive Statistics (mean, median and mode, standard deviation,…).

[2] Python fundamentals (syntax, control flow, functions,…).

[3] Basic command line experience.

[4] Basics of database management.

[5] Git version control system (a personal account on GitHub is required).

[6] As BIG DIVE is taught in English, good spoken and written English skills are required.
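As a quick self-check for requirement [1], the descriptive statistics it mentions can be computed with Python’s built-in `statistics` module. The scores below are made up for illustration; note that `stdev` is the sample standard deviation (dividing by n - 1), while `pstdev` is the population one (dividing by n).

```python
import statistics

# Made-up sample: eight hypothetical exam scores.
scores = [2, 4, 4, 4, 5, 5, 7, 9]

print("mean:  ", statistics.mean(scores))             # arithmetic average
print("median:", statistics.median(scores))           # middle value (average of the two middle ones here)
print("mode:  ", statistics.mode(scores))             # most frequent value
print("stdev: ", round(statistics.stdev(scores), 3))  # sample standard deviation
print("pstdev:", statistics.pstdev(scores))           # population standard deviation
```

If you can predict these results by hand (mean 5, median 4.5, mode 4, population standard deviation 2), your background matches what requirement [1] describes.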

Please write to us at info.bigdive@top-ix.org for any clarification.

No, your presentation video and letters of recommendation are not mandatory.

We encourage you to add them to your application: they help us get to know you and your motivation and better evaluate your profile.

The course is designed to be fully-attended in Turin, Italy.

During the first three weeks you’ll focus on real cases and tutorials, while in the final week you’ll work in a group to develop a final data science project.

Yes, at the end of the course you’ll receive a certificate of attendance if you attend more than 85% of the lessons and complete the final project with your group.